Site Directive

A downloadable tool for Windows

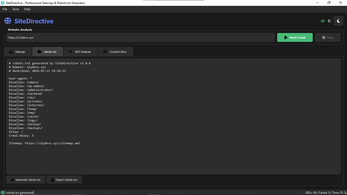

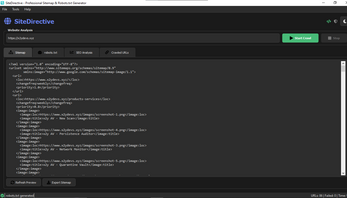

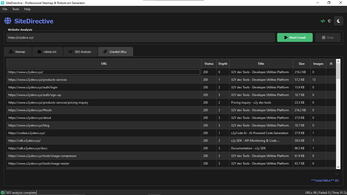

SiteDirective is a professional-grade desktop application designed for web developers, SEO specialists, and digital marketers who require precise control over website indexing and architecture analysis. Built as a high-performance crawling engine, the application automates the discovery of website structures to generate search engine-compliant XML, HTML, JSON, and TXT sitemaps.
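For readers unfamiliar with the XML format mentioned above, here is a minimal sketch of what generating a sitemap from a list of crawled URLs can look like. It is not SiteDirective's own code. The function name `build_sitemap` and the example URLs are assumptions made for illustration, and only the `urlset`/`url`/`loc` elements of the sitemaps.org schema are used.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return an XML sitemap string with one <url><loc> entry per URL.

    Hypothetical helper for illustration only.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc in urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in text nodes.
        ET.SubElement(url_el, "loc").text = loc
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap(["https://example.com/", "https://example.com/about"]))
```

A TXT sitemap, by comparison, is just the same URLs written one per line.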

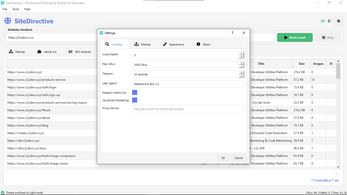

Beyond simple mapping, SiteDirective provides a robust environment for managing robots.txt files, allowing users to define custom allow/disallow rules, crawl-delay directives, and automated sitemap declarations to ensure optimal search engine bot behavior. The core engine is engineered to handle modern web standards, featuring native support for JavaScript rendering to accurately crawl Single Page Applications and dynamic content that traditional crawlers often miss. Users can configure deep-crawl parameters, including custom depth levels, User-Agent spoofing, and rate limiting to balance thoroughness with server stability.
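To make the robots.txt directives above concrete, the following sketch assembles a file with allow/disallow rules, a crawl-delay, and a sitemap declaration, then checks it with Python's standard `urllib.robotparser`. The helper `build_robots_txt`, the paths, and the URLs are hypothetical and are not taken from the application.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(user_agent="*", allow=(), disallow=(),
                     crawl_delay=None, sitemaps=()):
    """Assemble robots.txt text for one user-agent group (illustrative helper)."""
    lines = [f"User-agent: {user_agent}"]
    # Allow rules are written first: Python's parser applies the first matching rule.
    lines += [f"Allow: {path}" for path in allow]
    lines += [f"Disallow: {path}" for path in disallow]
    if crawl_delay is not None:
        lines.append(f"Crawl-delay: {crawl_delay}")
    lines.append("")  # a blank line closes the group
    lines += [f"Sitemap: {url}" for url in sitemaps]
    return "\n".join(lines) + "\n"


robots = build_robots_txt(
    allow=["/admin/public/"],
    disallow=["/admin/"],
    crawl_delay=2,
    sitemaps=["https://example.com/sitemap.xml"],
)

# Sanity-check the generated rules with the standard-library parser.
parser = RobotFileParser()
parser.parse(robots.splitlines())

if __name__ == "__main__":
    print(robots)
    print(parser.can_fetch("*", "https://example.com/admin/secret"))
```

Note that crawl-delay is not honored by every search engine, so the generated value should be treated as a hint rather than a guarantee.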

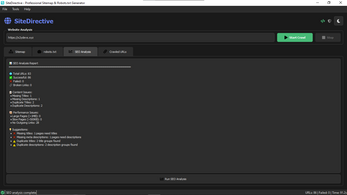

For advanced network environments, the application includes integrated proxy support for HTTP, HTTPS, and SOCKS5 protocols, so it can operate in geo-restricted or otherwise constrained network configurations. In addition to its generation capabilities, SiteDirective serves as a diagnostic tool for site health. It automatically identifies critical SEO issues such as broken links, missing metadata, duplicate content, and performance bottlenecks caused by oversized or slow-loading pages.

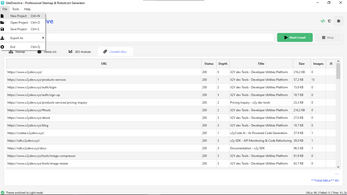

This data is presented through a clean, tabbed interface featuring sortable tables and live progress tracking. Prioritizing data sovereignty and security, the application performs all processing 100% locally on the user's machine, ensuring that sensitive site architectures and project data are never transmitted to the cloud.

Features

Full Website Crawling: Executes real-time fetching and parsing of web pages to map entire site architectures.

JavaScript Rendering: Full support for crawling Single Page Applications (SPAs) built with React, Vue, and Angular.

XML Sitemap Generation: Creates search engine-ready XML files compatible with Google, Bing, and Yandex.

HTML Sitemap Creation: Generates human-readable sitemaps for site navigation and user documentation.

JSON & TXT Exports: Provides developer-friendly formats for API integration and quick URL lists.

Robots.txt Architect: Builds optimized robots.txt files with custom allow/disallow rules.

Crawl Depth Control: Configurable navigation depth ranging from 1 to 10 levels.

Broken Link Detection: Automatically identifies 404 errors and dead internal/external links.

Metadata Audit: Detects missing or duplicate page titles and meta descriptions across the entire domain.

Performance Flagging: Identifies heavy pages (>1MB) and slow-loading URLs to improve Core Web Vitals.

Proxy Server Support: Integrated support for HTTP, HTTPS, and SOCKS5 proxies to bypass geo-restrictions.

User-Agent Rotation: Ability to simulate different browsers or search engine bots during the crawl.

Rate Limiting: Intelligent throttling to prevent server overloads and 429 "Too Many Requests" errors.

Sitemap Discovery: Automatically scans domains to find and analyze existing sitemaps.

Dark & Light Themes: Modern UI with a professional toggle for different lighting environments.

Tabbed Navigation: Organized interface for Sitemap, Robots.txt, SEO Analysis, and Crawled URLs.

Project Persistence: Save and load crawl sessions to track site changes over time.

100% Local Processing: Ensures total privacy by keeping all crawl data on your local machine.

Keyboard Shortcuts: Full support for standard Windows shortcuts (Ctrl+N, Ctrl+S, etc.) for rapid workflows.

Zero Telemetry: No background tracking, analytics, or data sharing with external servers.
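The crawl depth and broken link features listed above can be pictured with a small breadth-first traversal. This is a simplified sketch over an in-memory link graph and does not describe how SiteDirective is implemented. The `SITE` dictionary, the `crawl` function, and the convention that a missing page returns `None` are all assumptions for illustration; a real crawler would fetch pages over the network and throttle its requests.

```python
from collections import deque

# Hypothetical site: each page maps to the links found on it.
# "/missing" has no entry, so looking it up simulates a 404.
SITE = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1", "/missing"],
    "/blog/post-1": ["/deep"],
    "/deep": [],
}


def crawl(start, get_links, max_depth):
    """Breadth-first crawl limited to max_depth link hops from start.

    get_links(url) returns a list of links, or None if the page is broken.
    Returns (depths, broken): depths maps each discovered URL to its depth,
    broken is a list of (source_page, broken_target) pairs.
    """
    depths = {start: 0}
    broken = []
    queue = deque([(start, None)])
    while queue:
        url, source = queue.popleft()
        links = get_links(url)
        if links is None:
            broken.append((source, url))
            continue
        if depths[url] >= max_depth:
            continue  # do not follow links beyond the configured depth
        for target in links:
            if target not in depths:
                depths[target] = depths[url] + 1
                queue.append((target, url))
    return depths, broken


if __name__ == "__main__":
    depths, broken = crawl("/", SITE.get, max_depth=2)
    print(depths)
    print(broken)
```

With `max_depth=2`, the page `/deep` is never discovered because it sits three hops from the start page, while `/missing` is reported as a broken link found on `/blog`.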

What's New in Version 2.0.0

Complete UI Redesign - Modern dark/light theme with professional icons

Real Website Crawling - Actually fetches and parses web pages

SEO Analysis Engine - Detects missing titles, descriptions, and broken links

JavaScript Rendering - Crawls SPAs and dynamic websites properly

Proxy Support - Bypass rate limiting and geo-restrictions

Multiple Export Formats - XML, HTML, JSON, and TXT sitemaps

Intelligent robots.txt - Auto-generates optimized robots.txt files

Performance Optimized - 8-15% CPU usage on 8GB RAM systems

Professional UI - Clean, intuitive interface with tabbed navigation

Site Directive is developed and maintained by x2y Devs Tools. For support, visit x2ydevs.xyz.

| Field | Value |
| --- | --- |
| Updated | 3 days ago |
| Published | 4 days ago |
| Status | Released |
| Category | Tool |
| Platforms | Windows |
| Author | x2y Devs Tool |
| Tags | apps, crawler, indexer, new, Robots, site-directive, sitemap, x2y-devs-tools |
| Content | No generative AI was used |

Purchase

To download this tool, you must purchase it at or above the minimum price of $4 USD. You will get access to the following files: